(Part 1 of 3)

A brain, and in fact the entire nervous system of any animal, is made out of neurons, specialized cells that work like tiny little processors. There are lots of types of neurons, but they are all more alike than different. In general, they are pattern-matching machines: they have a set of inputs, and if those inputs match certain patterns they fire a pulse on their single output. Our bodies have neurons all through them, not just in the brain. The "nerves" in your fingers are neurons. Neurons sense smells and tastes. Neurons send pulses to your muscles to make them flex. And all of these input and output neurons are connected to the brain by bundles of other neurons, including the spinal cord. In fact, some processing goes on "locally" outside the brain, especially in the spinal cord. When the doctor taps your knee with a hammer to test your reflexes, that reflex is an action controlled outside the brain, mostly in the spinal cord. These local circuits are mostly arranged as "feedback control systems" similar to the cruise control of your car.

Different species of animals, depending on how complex and intelligent they are, have more or fewer neurons. Humans are at the upper end of complexity (and supposedly intelligence) and we have around a hundred billion neurons. That's a lot. We won't be putting a hundred billion in our Arduino. Maybe a few dozen. A hundred billion will be left as an exercise for the reader.

All of these neurons are connected together in various ways, hence the name "network." Those connections are not usually simple patterns, and that is perhaps the biggest difficulty in making a brain. Mapping the connections of a hundred billion neurons in a working brain is difficult; the owner of the brain gets a bit upset if you take a scalpel and a microscope to his head. A typical neuron might have a hundred connections. That means a human brain has something like ten trillion connections. And they go all over the place, not just to nearby neurons in nice, neat arrangements.

But that is the essence of what a neural network is. It is a great big heaping bunch (usually) of neurons connected together. Let's build one.

Since a brain is made of neurons, we need to have an understanding of what neurons are and how they work. This will be a simplified, basic introduction. Some details will be missing and some descriptions will be simplified. But overall the description should be pretty accurate. At least accurate enough for us to make our own.

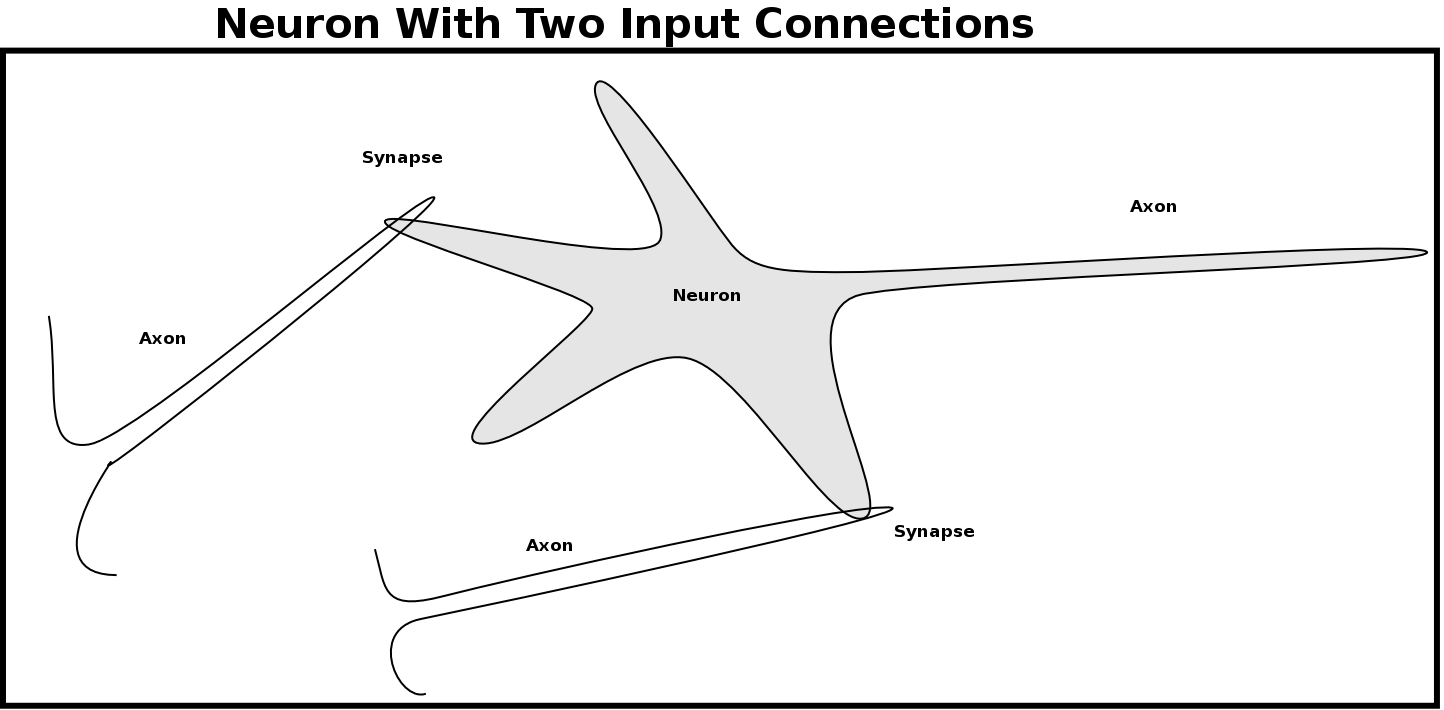

As I mentioned, a neuron is basically a pattern matching device. The pattern it will recognize can be "programmed" into it. We will see how in a minute. The neuron has a single output, called an "axon." When it matches its pattern, a short pulse is sent out on the axon. The neuron also has inputs, called "synapses." A synapse is where the output axon of one neuron touches the body of a different neuron. That connection is the synapse. Each neuron can have a variable number, from one to hundreds, of these synapses. In many cases these synapses, or inputs, are simply a connection to the axon (output) of another neuron. An axon may be short or very long and can form a synapse (connection) with one or up to hundreds or even thousands of other neurons.

Let's look at how the neuron is put together. A real neuron is a living cell, made out of chemicals and proteins and other icky stuff. I got kicked out of chemistry class when I set the lab on fire with the Bunsen burner, so I'm not going into the details of how the chemistry works. Instead, we will just look at the result of what all that chemistry accomplishes.

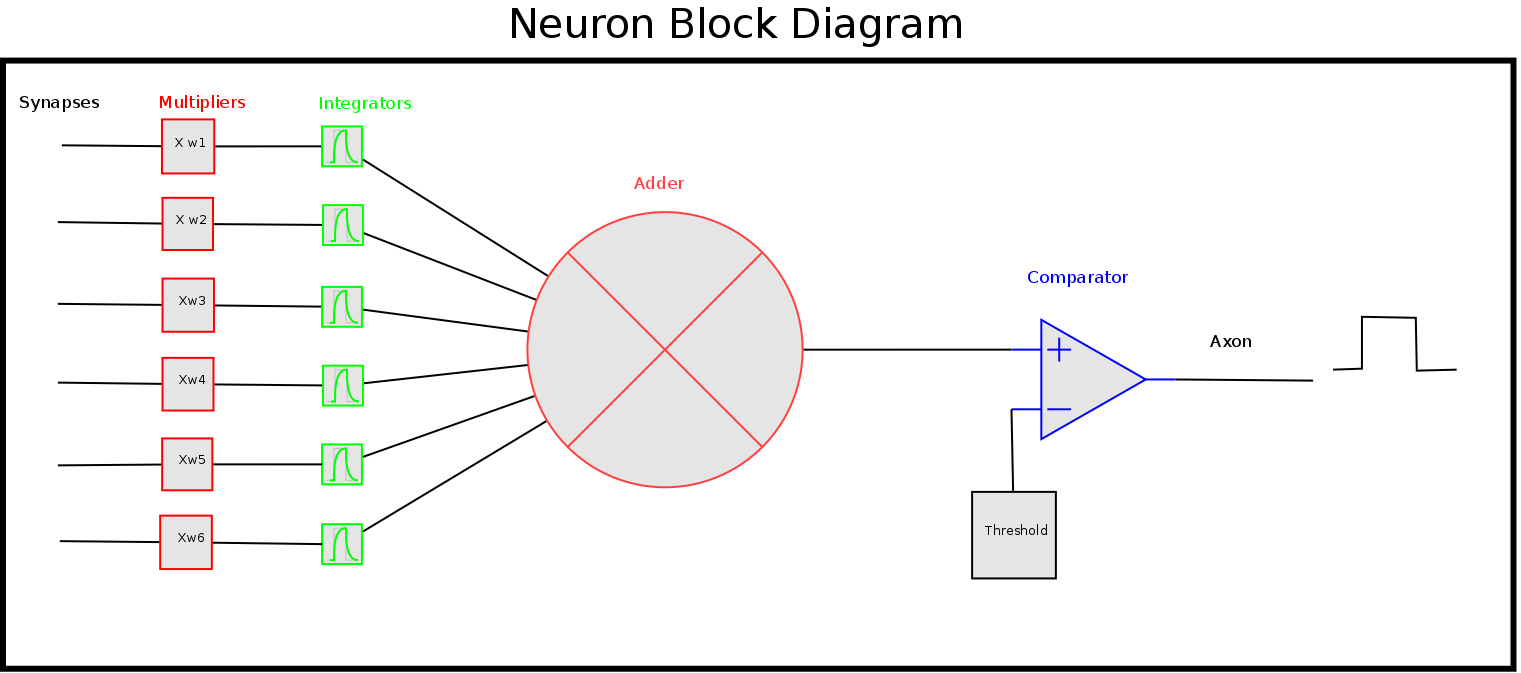

Each synapse has a "weight" value. The incoming pulse on that synapse is considered to have a value of 1. It is a pulse lasting a short time, perhaps a millisecond. Each time a pulse comes in on that synapse, it is multiplied by the weight of that synapse. The weight may be either positive or negative.

Here is a picture of a neuron. You can see I didn't do any better in art class than I did in chemistry.

All of the input values are multiplied by their respective weights and then added together. Remember that some of the weights may be negative, so those inputs will decrease the sum. Finally, the neuron as a whole has a "threshold" value. If the sum of all the inputs multiplied by their weights is greater than the threshold, the neuron will fire a pulse on its output axon.
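The weigh-add-compare rule described above can be sketched in a few lines. This is only a minimal illustration in Python (the real Arduino code comes in part 2); the function name `neuron_fires` and the weight and threshold values are made up for the example.

```python
def neuron_fires(inputs, weights, threshold):
    """Return True if the weighted sum of the inputs exceeds the threshold."""
    # Each input is 1 (a pulse arrived) or 0 (no pulse).
    total = sum(x * w for x, w in zip(inputs, weights))
    return total > threshold


# Hypothetical neuron: two excitatory synapses and one inhibitory synapse.
weights = [2, 2, -3]
threshold = 3

print(neuron_fires([1, 1, 0], weights, threshold))  # sum is 4, prints True
print(neuron_fires([1, 1, 1], weights, threshold))  # sum is 1, prints False
print(neuron_fires([1, 0, 0], weights, threshold))  # sum is 2, prints False
```

Notice that the third synapse, with its negative weight, can veto a pattern that would otherwise fire the neuron.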

Here is an engineering model diagram. I like this better.

Now, that is not a complete picture of a real neuron, but it is the model used in many neural networks. Mother Nature has a trick up her sleeve that is often left out of these simpler neuron models. As many famous scientists have pointed out, Mother Nature tends to take the simplest way that will work, so if she felt it necessary to put this trick in, we should too. Let's look at that.

What is this little trick Mother Nature added to the neuron? Well, in the model above all the inputs must happen simultaneously. That is kind of like your computer, where a clock pulse determines when anything can happen. But in the real world, things are not synchronized to a clock pulse. Neurons can fire at any time (they are "asynchronous"). Inputs can happen at any time. If you step on a hot coal you don't want to wait around for a series of clock pulses to transfer that information from one step to the next; you want to know NOW. So the neuron pulses aren't synchronized. How can we still compare the patterns if the inputs aren't happening at the same time? Memory!

Each synapse has a storage element. Basically it is like a capacitor that charges up when an input pulse happens, then slowly discharges to nothing unless another pulse comes in to recharge it. That way, if two pulses come in at slightly different times, they can still be matched as a single pattern.

Here is the engineering diagram with the storage added.

That covers most of what we need to know about neurons. They take each input and multiply it by a weight value. That multiplied input is stored temporarily and all the stored values (positive or negative) are added together. If that sum exceeds a certain stored threshold value a pulse is fired. Pretty simple, yet capable of some fantastic feats.
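Here is one way that summary might look in code. It is a rough Python sketch, not the Arduino program from part 2, and it makes several assumptions of its own: time is chopped into discrete steps (a real neuron has no clock), a pulse recharges the synapse's stored value to its full weight, and the stored value shrinks by a fixed `decay` factor on every step without a pulse. The class name `Neuron` and all the numbers are hypothetical.

```python
class Neuron:
    """Toy neuron with a decaying storage element on each synapse."""

    def __init__(self, weights, threshold, decay=0.5):
        self.weights = list(weights)
        self.threshold = threshold
        self.decay = decay  # assumed: fraction of the stored value kept per step
        self.stored = [0.0] * len(self.weights)

    def step(self, pulses):
        """Advance one time step; pulses[i] is 1 if synapse i got a pulse."""
        for i, pulse in enumerate(pulses):
            if pulse:
                # A pulse (value 1) times the weight recharges the storage.
                self.stored[i] = 1 * self.weights[i]
            else:
                # No pulse: the stored value slowly discharges.
                self.stored[i] *= self.decay
        # Fire if the sum of the stored values exceeds the threshold.
        return sum(self.stored) > self.threshold


# Two pulses arriving one step apart are still matched as one pattern.
near = Neuron(weights=[1.0, 1.0], threshold=1.2)
print(near.step([1, 0]))  # stored sum 1.0, prints False
print(near.step([0, 1]))  # stored sum 1.5, prints True

# The same two pulses arriving too far apart are not matched.
far = Neuron(weights=[1.0, 1.0], threshold=1.2)
far.step([1, 0])
far.step([0, 0])
far.step([0, 0])
print(far.step([0, 1]))   # stored sum 1.125, prints False
```

The `decay` value sets how long the neuron "remembers" a pulse. A value closer to 1 gives a wider matching window, a value closer to 0 a narrower one.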

We've got an idea of what a neural network is: a group of neurons connected together. We have looked at what a neuron is and how it works. Now it's time to put the neurons together into useful networks.

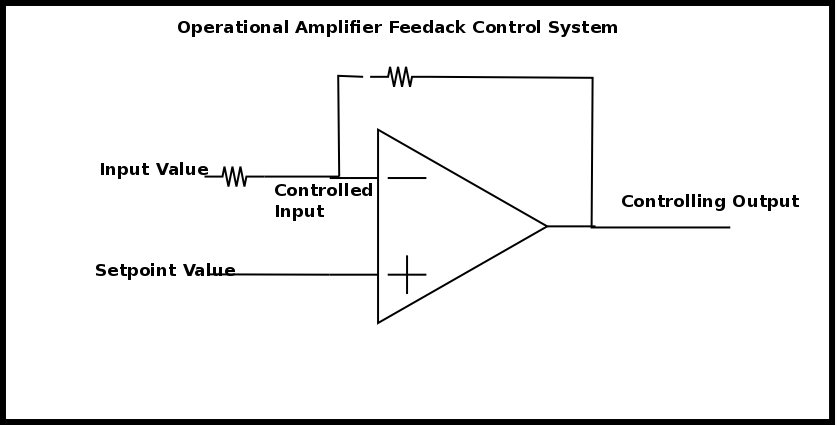

It turns out that much, if not all, of the nervous system is a "control network." Have you ever added a PID (Proportional, Integral, Derivative) algorithm to a robot or other system? Perhaps you used PID to regulate the speed of wheels, or the distance traveled. If you have used a 3D printer, PID is normally used to regulate the head temperature. PID is a type of feedback control network. An operational amplifier is another type. The main characteristic of these feedback control networks is that they are given a "setpoint" and the output changes in an attempt to get the input to match the setpoint. It's important to realize that the INPUT is being controlled, not the output. The output is simply the means used to control the input. That's a bit confusing, so let's look at an example.

Here is a simple feedback amplifier circuit (an operational amplifier). It has two inputs: an inverting input (marked with -) and a non-inverting input (marked with +). If we put some setpoint voltage on the non-inverting input and feed the output back to the inverting input, the amplifier will change the output until the inverting input matches the non-inverting input. It doesn't care what the output is, only that the output causes the two inputs to match. By varying the resistors in the feedback path, the output required to match the two inputs will change.
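The same idea can be tried out in software. The sketch below is a toy Python loop, not a model of a real op-amp or a full PID: it only accumulates the error (roughly the "I" part of PID), and the "world" is assumed to be a simple multiplier called `plant_gain` that turns the output into the sensed input. All names and numbers are invented for the illustration.

```python
def run_control_loop(setpoint, plant_gain, steps=200, controller_gain=0.5):
    """Adjust the output until the sensed input matches the setpoint."""
    output = 0.0
    sensed = 0.0
    for _ in range(steps):
        error = setpoint - sensed          # how far the input is from the setpoint
        output += controller_gain * error  # nudge the output to shrink the error
        sensed = plant_gain * output       # the world turns output into input
    return sensed, output


# Same setpoint, two different "worlds". The controlled input ends up
# at the setpoint both times, but the output needed to get there differs.
for gain in (0.5, 0.25):
    sensed, output = run_control_loop(setpoint=2.0, plant_gain=gain)
    print(gain, round(sensed, 3), round(output, 3))
```

Changing `plant_gain` plays the same role as changing the resistors: the loop does not care what the output ends up being, only that the input matches the setpoint.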

And that is how the nervous system works. The neurons are connected in patterns that form a large network of simpler feedback control systems. The neurons try to output pulses that cause the input patterns to match. Some of these control systems are rather simple and some are more complex. And they are put together in layers, with setpoints being determined for one control system from the output of another control system, perhaps in a different layer. I think the current estimate is that a human has 11 layers. You can see it gets complex really fast. We won't build 11 layers like a human has. I'm thinking more like a garden slug, maybe two or three layers. We'll see.
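To make the layering idea concrete, here is a hypothetical two-layer example in Python. It is not neurons and not the network we will build, just two stacked feedback loops with made-up gains: the upper layer controls a position, and its output is not sent to the world at all but becomes the setpoint of the lower layer, which controls a speed.

```python
def run_two_layers(target_position, steps=200):
    """Upper loop controls position; its output is the lower loop's setpoint."""
    position = 0.0
    speed = 0.0
    throttle = 0.0
    for _ in range(steps):
        # Upper layer: its output is a speed setpoint, not a motor command.
        speed_setpoint = 0.2 * (target_position - position)
        # Lower layer: adjust the throttle until the sensed speed matches.
        throttle += 1.0 * (speed_setpoint - speed)
        speed = 0.5 * throttle   # assumed toy world: speed follows throttle
        position += speed        # assumed toy world: position follows speed
    return position, speed


position, speed = run_two_layers(target_position=10.0)
print(round(position, 3), round(speed, 3))
```

Each layer only knows about its own setpoint and its own input, yet together they bring the position to the target and then come to rest.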

That concludes part 1. We have seen what we need to do to build our brain. The next step will be to write a program for the Arduino to make that happen. That will be coming soon in part 2. Once we have written the code we will have our brain, but it won't be able to do anything. It will still need to be "programmed" to accomplish some task. We will finally get to that in part 3. But we have to be careful; we don't want the Arduinos becoming too intelligent and taking over the world!